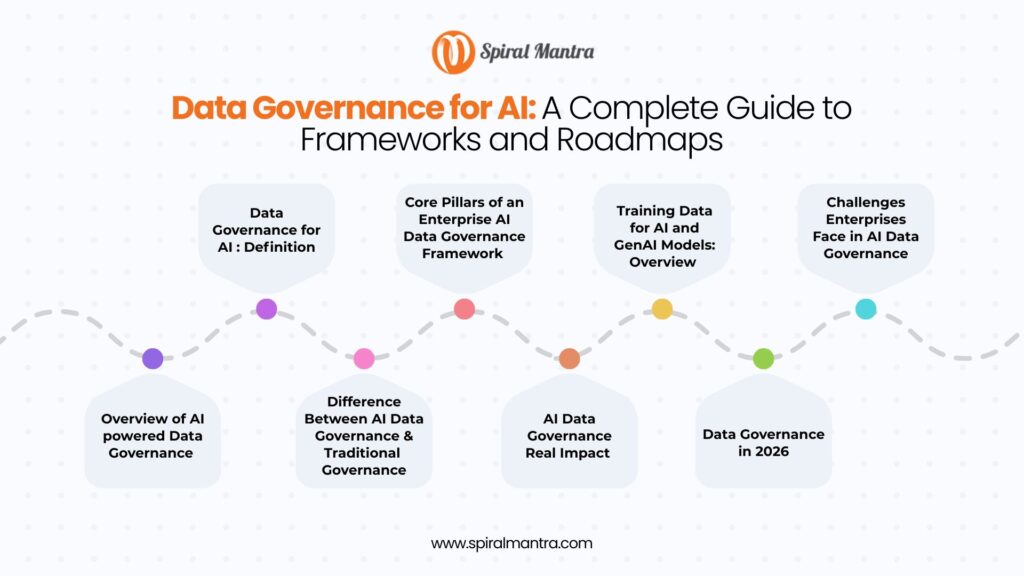

Overview of AI powered Data Governance Processes

In 2018, Amazon discontinued an AI recruitment tool that discriminated against female candidates because it was trained on resumes submitted over 10 years, mostly from men.

This reflects systemic industry gender bias and highlights how, without explainability, auditability, and proper data governance, AI issues can quickly escalate into reputational risks for businesses.

Our data engineering services expert helps CIOs, CTOs, GRC professionals, Machine Learning Experts, AI Specialists, and Compliance Officers understand why AI data governance is important.

Data Governance for AI : Definition

AI Data Governance is a set of policies, processes, and tools that ensures data used to train, test, and operate AI models is secure, unbiased, and compliant throughout their life cycle.

In simple words, it acts as a foundation to reduce risks like hallucination, data leakage, and discrimination.

For enterprises, this means governing not just what data exists, but how it is sourced, labeled, accessed, transformed, and consumed by machine learning, AI models, and agentic systems.

Importance of AI Governance for Enterprise Success

A common myth in enterprise AI is that data problems can be resolved later.

They cannot, and the consequences are no longer theoretical. Today, enterprises are already dealing with biased models on irrelevant data, compliance complications from uncontrolled PII flowing into large language models, and AI outputs that business heads hesitate to act upon.

2026 marks the diversion point. As the EU AI Act moves into its enforcement phase, expectations around data governance, transparency, and auditability are rising.

At the same time, as Agentic AI enters the scene, the gap between enterprises with AI data governance and those without is becoming hard to close.

Difference Between AI Data Governance & Traditional Governance

Traditional data governance was always meant to be predictable: referring to structured data, analysis by analysts, and immeasurable compliance requirements. Over the years, AI has broken these assumptions.

On the other hand, AI Data Governance is meant to handle large volumes of unstructured data. This could be anything, right from text, images, audio, to video, that traditional governance models are not trained to manage.

The future is clear: that data & AI Governance are now critical to businesses, and not implementing one can create roadblocks difficult to manage.

Core Pillars of an Enterprise AI Data Governance Framework

An enterprise AI Governance Framework is built on a solid foundation where every insight is explainable, auditable, and accountable.

In 2026, ‘having data’ is no longer enough; you must prove the usability of the data every step of the way. Here’s a list of core pillars that form the foundation of AI models:

Usable data quality: Good quality data is not the same as quality. An AI model requires data that is accurate, complete, current, and relevant to the specific use case it is being trained on. Otherwise, it may produce results that are not only wrong but also disastrous.

Data lineage and origin: Every data point that enters the training pipeline must be traceable from start to finish. Data lineage refers to the system by which businesses can audit model behavior, look into bias complaints, and reproduce model outputs when required. Today, this is non-negotiable, and not having this can feel like targeting arrows in the dark.

Data security, privacy, and PII: PII(personally identifiable information) entering LLM training pipelines means exposure under GDPR, CCPA, and the EU AI Act. Therefore, enterprise-grade governance requires automated PII detection and redaction at the pipeline level, RBAC (role-based access control), consent management frameworks that track what data was collected, for what purpose, and whether it's of use.

Metadata management, cataloging: As agentic AI systems begin to query data autonomously, the quality of the metadata layer becomes as important as the quality of the data itself. This means datasets should be discoverable, their lineage documented, and provision of semantic context for both AI and humans.

Compliance measures: Providers of high-risk AI systems must ensure compliance is followed throughout the system’s lifecycle. This includes documenting the risk management system, placing the right data governance measures, detailed technical documentation, automatic logging, and a human overview.

AI Data Governance Real Impact

Most businesses think data governance is unnecessary unless it starts slowing down AI initiatives. This is exactly what happened for a leading US-based software development company operating on a legacy Hive Metastore.

Limited visibility into data lineage, fragmented ownership of datasets, and inconsistent access controls were not just operational challenges but barriers to using AI fully.

By migrating to Databricks Unity Catalog, we moved their systems from reactive governance to a unified, enterprise-grade control layer. This shift delivered immediate business outcomes. Their data teams gained end-to-end visibility into upstream and downstream data flows, reducing the extra time required to document lineage and validate data for AI models.

Central ownership replaced fragmented control, removed duplication, and improved data reliability.

More importantly, governance became actionable. Features like fine-grained access monitoring and secure data sharing helped our client control who accesses data as well as use it across teams and environments.

What was once a manual, resource-intensive process has now turned into a productivity multiplier by reducing effort by up to 60%, using automation frameworks like UCX.

Training Data for AI and GenAI Models: Overview

Training data is where most governance initiatives fail. Bias, toxicity, PII, and intellectual property exposure all enter AI systems through training data. And by the time one notices it, a biased or contaminated model is in production!!

The practical governance requirements for training data are specific: check data quality before it enters training pipelines; maintain an uncorrupted record of every training dataset used for every model; document the origin of synthetic data, including what biases it may have, and whether it accurately represents the real-world distribution.

For generative AI and LLM deployments, the stakes are high. According to our AI engineers, even small volumes of incorrect data in a training corpus can compromise its potential, meaning pre-training data check is not just a task but a crucial activity.

Overview of Data Governance in 2026

The regulatory rules for AI data governance have moved from advisory to enforceable in the span of eighteen months. Some of the key changes that we might see in action soon are proper data governance, technical documentation, transparency, human involvement, and cybersecurity.

And what does this mean for businesses involved in financial services, healthcare, and critical infrastructure?

Our experts at Spiral Mantra believe that the signal is clear; practical implementation of data governance is essential now. And businesses that want to stay ahead must stay flexible for these changing waves.

Challenges Enterprises Face in AI Data Governance

From data fragmentation across different business units to manual governance processes, the challenges in enterprise AI data governance are both organizational and technical. Talent gap is another issue that businesses face.

Data governance and AI are both specialized disciplines, and leaders who get both are rare. And this is the reason why businesses that treat governance as a compliance function continuously underperform.

Therefore, to sum it all up, ungoverned AI produces output that most business leaders don't dare to trust, and compliance issues halt these entirely.

That's why at our data analytics solutions company, we believe in starting where the risk is highest. Apart from that, establishing cross-functional ownership is important, and so is executing in phases.

Sources

Amazon: https://www.bbc.com/news/technology-45809919

EU AI Act: https://www.europarl.europa.eu/topics/en/article/20230601STO93804/eu-ai-act-first-regulation-on-artificial-intelligence#:~:text=it%20is%20used-,What%20Parliament%20wanted%20in%20AI%20legislation,automation%2C%20to%20prevent%20harmful%20outcomes.